You have an idea. You're convinced it's valuable. The temptation is to build everything—every feature you've imagined, every enhancement you've envisioned—and launch something complete.

This approach fails more often than it succeeds. It takes too long. It costs too much. And frequently, the assumptions behind all those features turn out to be wrong.

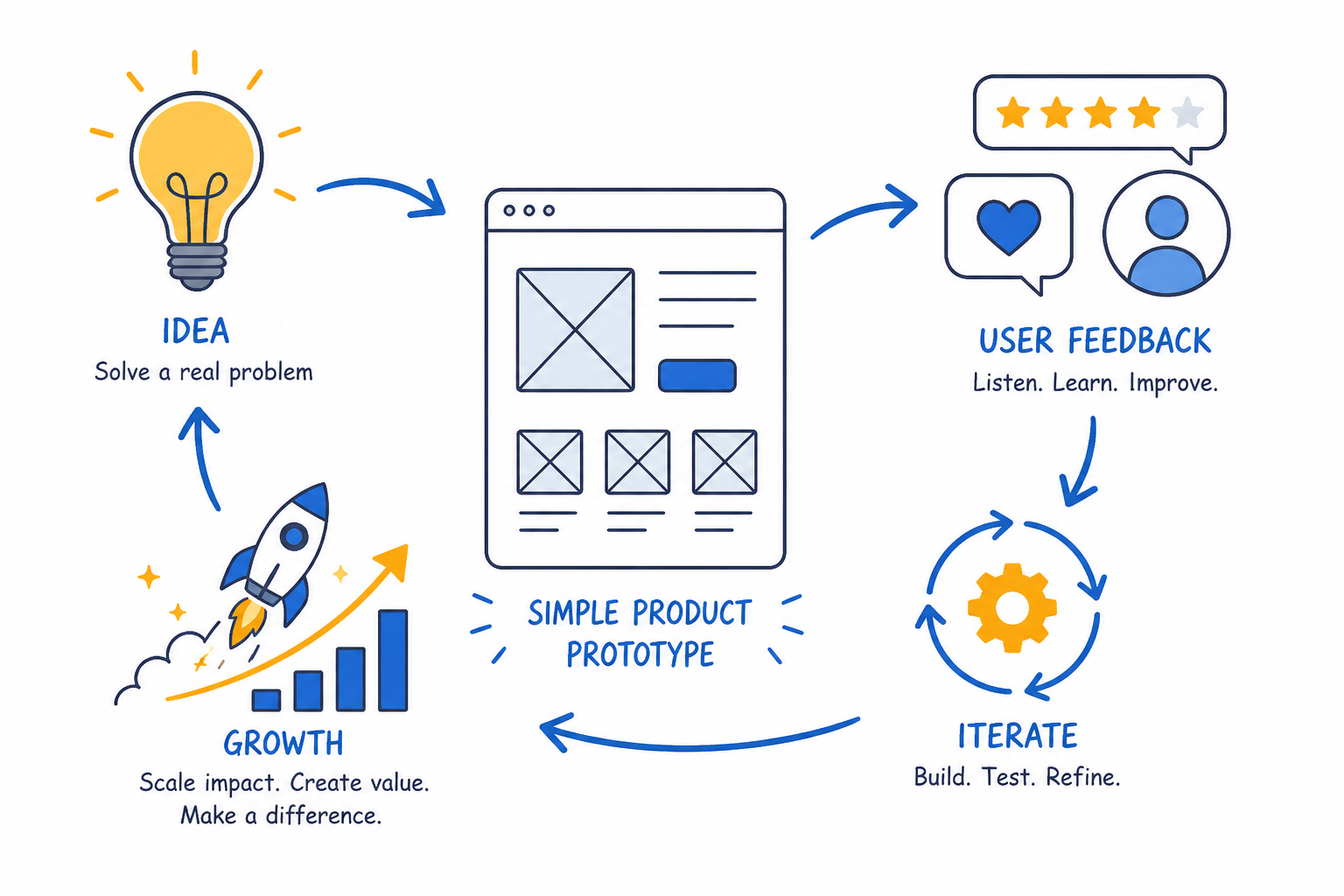

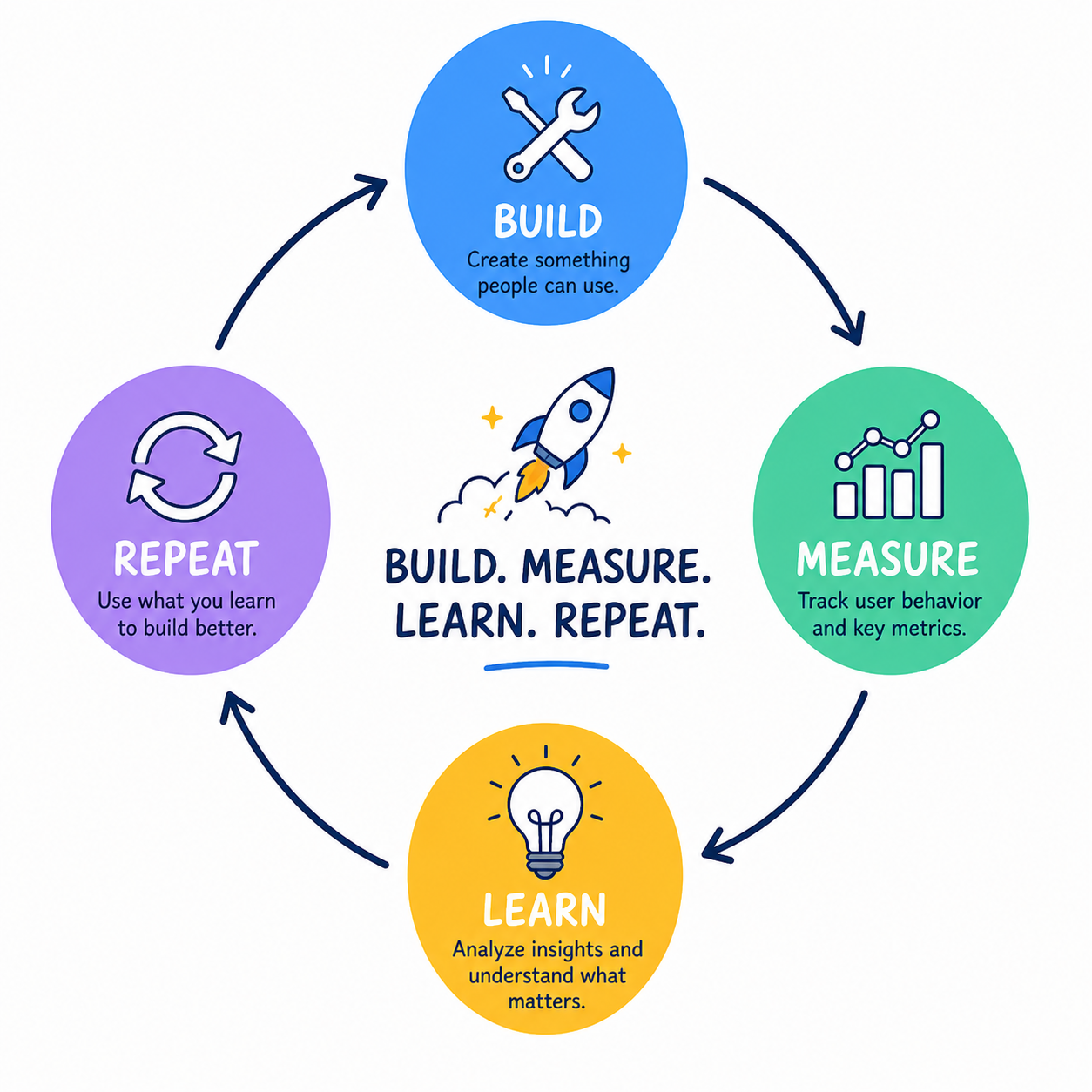

The MVP approach inverts this. Build the smallest thing that tests your hypothesis. Get it in front of real users. Learn what actually matters. Then build more—informed by reality rather than guesses.

This guide covers how to think about MVPs, what to include and exclude, how to build efficiently, and how to learn from what you release.

What an MVP Actually Is

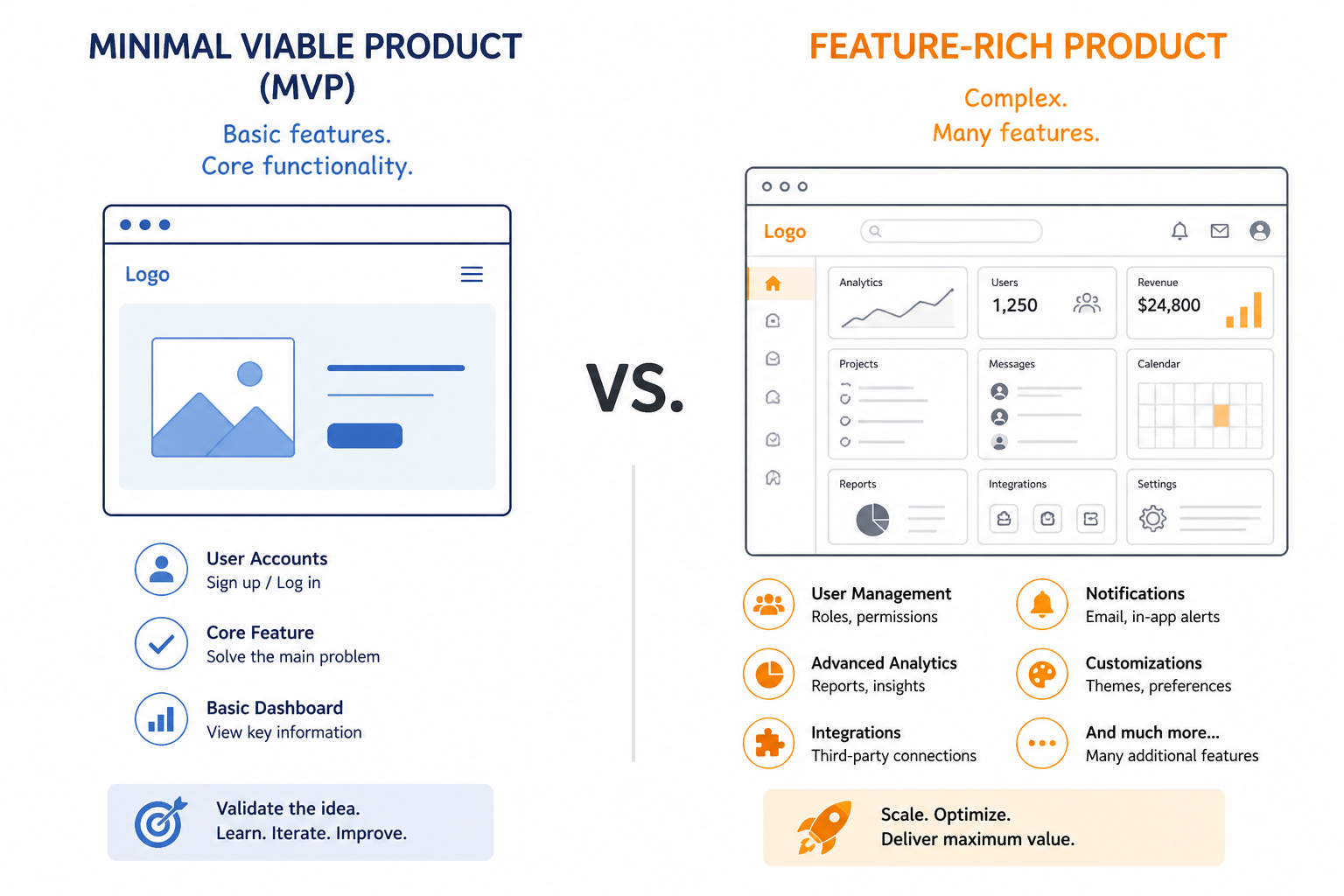

MVP stands for Minimum Viable Product. Each word matters:

Minimum. The least amount of work that could possibly validate your hypothesis. Not minimal in quality—minimal in scope.

Viable. Actually works. Provides real value to real users. Not broken, not incomplete to the point of uselessness.

Product. Something users interact with. Not a document, not a slide deck, not a conversation. An actual product someone can use.

The goal isn't to build a bad version of your full vision. It's to find the core of your idea and test whether that core resonates before investing in the periphery.

Why MVPs Work

You don't know what users want until you see them use it.

Your assumptions about what matters, what users will do, how they'll respond—these are educated guesses at best. Until real users interact with real software, you're theorizing.

MVPs create learning opportunities faster. Instead of spending 18 months building your vision only to discover fundamental assumptions were wrong, you spend 8 weeks testing core hypotheses.

Resources are limited.

Startups have finite time, money, and energy. Spending these resources on features that won't matter wastes them. MVPs focus investment on what actually drives value.

Markets change.

By the time you build everything, the market may have shifted. Competitors may have emerged. Customer needs may have evolved. Shipping faster keeps you responsive to reality.

Defining Your MVP Scope

This is the hardest part. What's truly minimum? What's actually viable?

Start with the Core Problem

What problem are you solving? Not what product are you building—what problem does it solve?

Write it as one sentence:

- "Users struggle to track expenses across multiple accounts"

- "Small businesses can't easily book appointments online"

- "Teams waste time searching for shared documents"

Your MVP must address this core problem. If it doesn't, it's not testing your hypothesis.

Identify Must-Have vs Nice-to-Have

List every feature you've imagined. Now categorize brutally:

Must-have (MVP includes):

- Features without which the core problem isn't solved

- Features required for users to complete their primary goal

- Features that differentiate you enough to be chosen

Nice-to-have (not in MVP):

- Features that enhance but aren't essential

- Edge case handling

- Automation of manual processes

- Premium functionality

Be honest. "Must-have" should mean the product literally doesn't work without it, not "would be worse without it."

Define Success Criteria

Before building, define what you'll learn from the MVP:

- What questions are you trying to answer?

- What behavior indicates validation?

- What would convince you to proceed? Pivot? Stop?

Without clear success criteria, you'll rationalize whatever happens. Define goalposts upfront.
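One way to keep the goalposts fixed is to write them down as data before launch. The sketch below is only an illustration: the metric names, the threshold values, and the `evaluate` helper are all hypothetical, so substitute whatever behavior would actually validate your own hypothesis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """One success criterion, fixed before the MVP launches."""
    metric: str        # hypothetical metric name, e.g. "week_2_retention"
    proceed_at: float  # at or above this value, the hypothesis looks validated
    stop_below: float  # below this value, the hypothesis looks refuted


def evaluate(criterion: Criterion, observed: float) -> str:
    """Map an observed value to a decision that was agreed on upfront."""
    if observed >= criterion.proceed_at:
        return "proceed"
    if observed < criterion.stop_below:
        return "stop"
    return "pivot or iterate"


# Illustrative thresholds only (assumptions); choose your own before building.
CRITERIA = [
    Criterion("week_2_retention", proceed_at=0.30, stop_below=0.10),
    Criterion("paid_conversion", proceed_at=0.05, stop_below=0.01),
]

if __name__ == "__main__":
    # Made-up observations, used only to show how the decision is read off.
    observed = {"week_2_retention": 0.22, "paid_conversion": 0.06}
    for c in CRITERIA:
        print(c.metric, "->", evaluate(c, observed[c.metric]))
```

Because the thresholds are frozen before any data arrives, a disappointing number cannot quietly be reinterpreted as a success after the fact.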

Common MVP Approaches

Different types of MVPs suit different situations:

Concierge MVP

Instead of automating, you do the work manually behind the scenes. Users interact as if using a product, but humans deliver the service.

Example: A food delivery service where the founder personally calls restaurants and delivers orders, before building any technology.

Best for: Testing whether users will pay for a service before investing in automation.

Wizard of Oz MVP

Similar to concierge, but users believe they're interacting with technology. The human work is invisible.

Example: A chatbot that's actually a person typing responses, testing whether a conversational interface resonates.

Best for: Testing technology concepts without building the technology.

Single-Feature MVP

Build one feature excellently. Nothing else.

Example: Early Dropbox focused on file syncing and little else, but that one thing worked reliably.

Best for: Testing whether your core feature delivers enough value to build around.

Landing Page MVP

A page explaining what you'll build, capturing email signups or even pre-orders.

Example: Buffer started with a landing page describing the product and its pricing plans before the product itself was built.

Best for: Testing demand before building anything.

Piecemeal MVP

Stitch together existing tools to simulate your product.

Example: Using Typeform for input, Zapier for automation, and email for delivery before building custom software.

Best for: Testing workflows and value propositions quickly.

Building the MVP

Once scope is defined, execution matters.

Choose Speed Over Perfection

Code quality, architectural elegance, comprehensive test coverage—these matter for products you'll maintain for years. For MVPs, they matter less.

Ship faster. Learn faster. If the MVP validates, you'll likely rewrite much of it anyway once you understand requirements better.

This doesn't mean writing broken code. It means not over-engineering for scale you haven't proven you'll need.

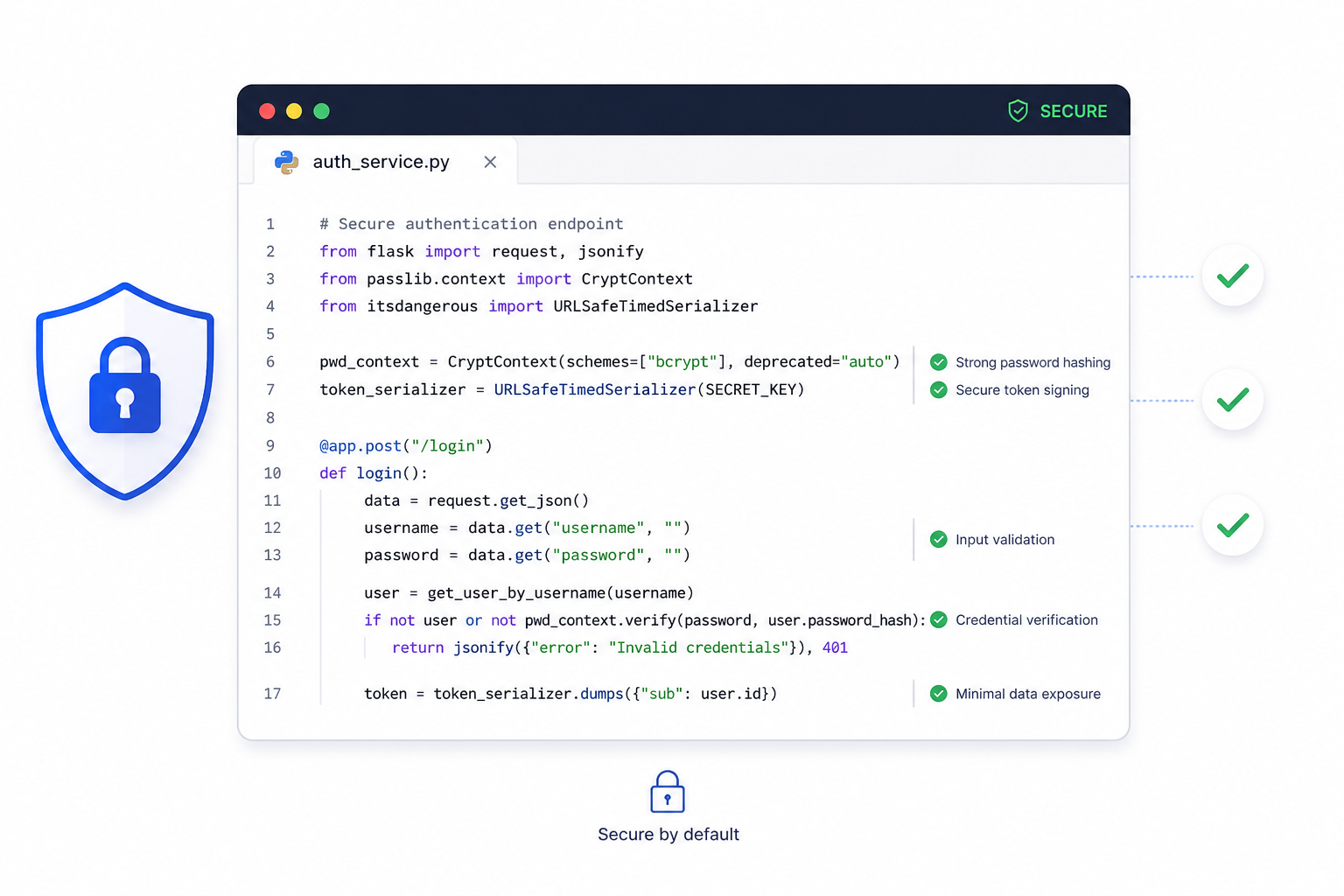

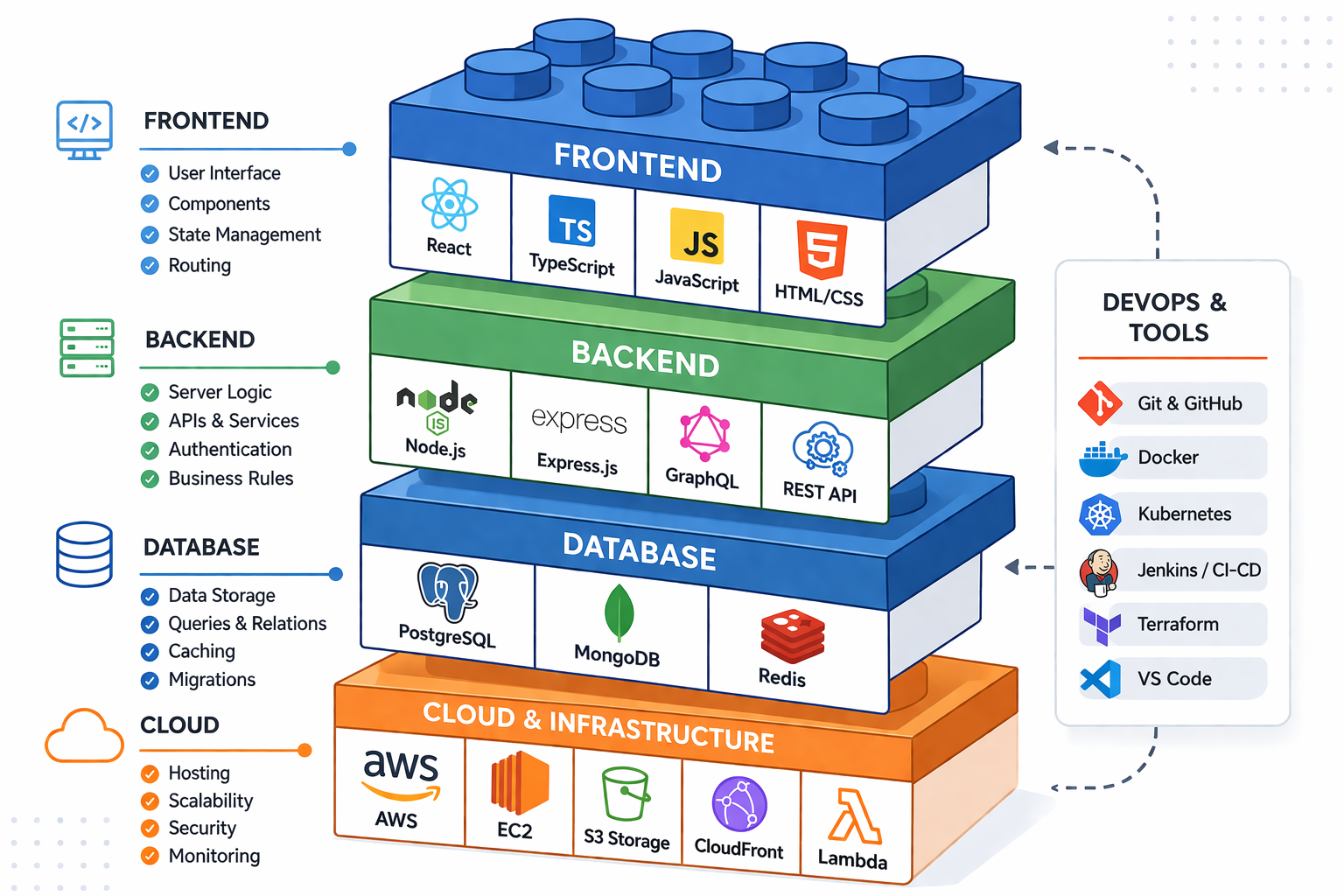

Use Existing Tools and Services

Don't build what you can buy or integrate:

- Authentication? Use Auth0, Firebase Auth, or similar

- Payments? Stripe, Razorpay

- Email? SendGrid, Mailgun

- Database? Managed services

- Hosting? Platform as a Service

Every integration you don't build is time saved for building your actual differentiator.

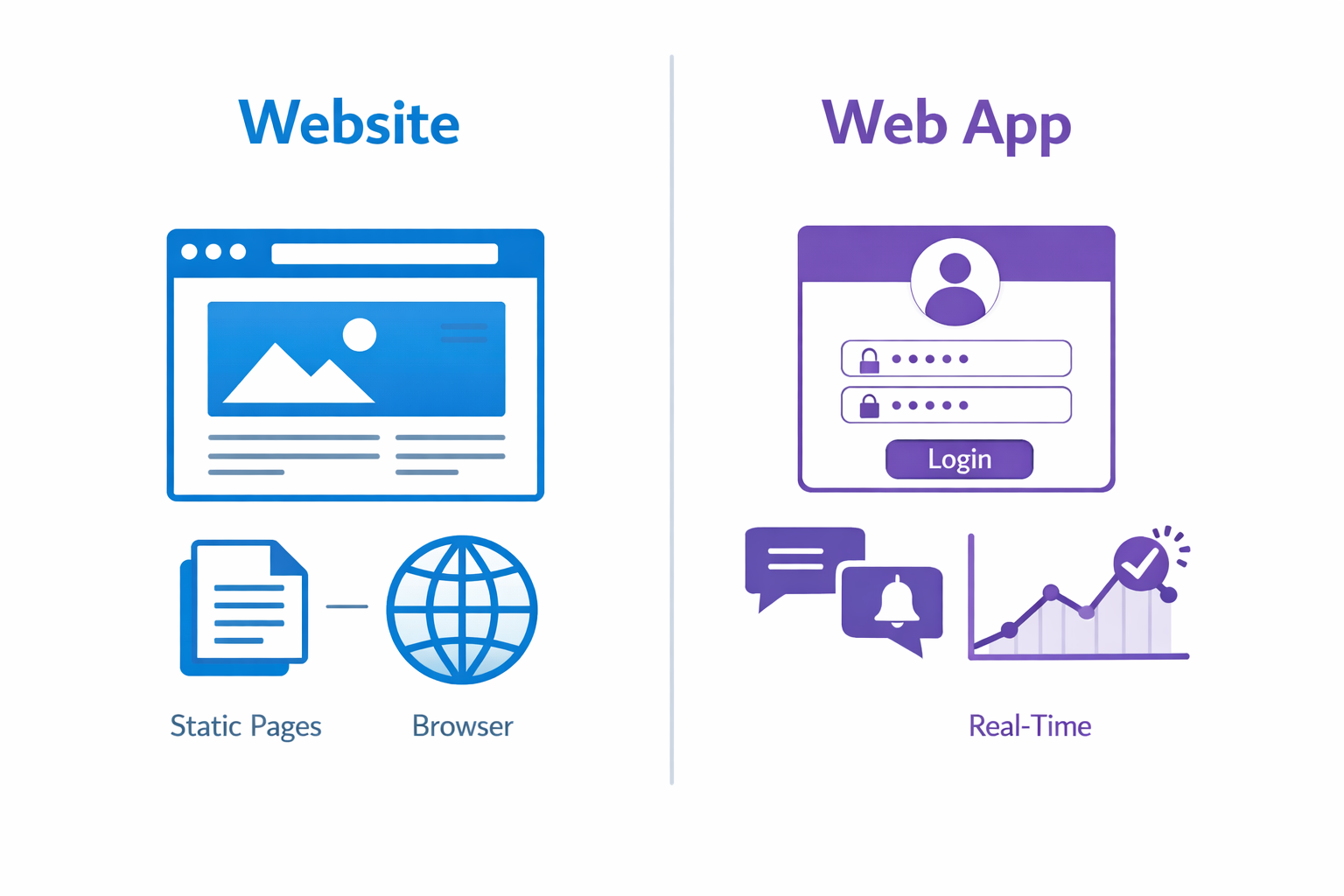

Limit Platforms Initially

Don't build an iOS app, an Android app, and a responsive web app simultaneously. Pick the one platform where your target users are concentrated. Expand later if the idea is validated.

For most startups, a responsive web app is the fastest path. It needs no app store approval, updates deploy instantly, and it works on any device with a browser.

Set a Time Constraint

Open-ended development expands to fill available time. Set a deadline—6 weeks, 8 weeks—and ship whatever is ready.

This forces prioritization decisions. Features compete for limited time. What actually matters rises to the top.

Launching and Learning

The MVP isn't done when you ship. It's done when you've learned.

Get in Front of Real Users

Not friends and family who'll be polite. Real potential customers who have the problem you're solving.

Find them:

- Online communities where your target users gather

- Social media platforms they use

- Cold outreach to likely prospects

- Beta programs or early access offers

Measure What Matters

Define metrics before launch:

- Engagement: Are users actually using it? How often? For how long?

- Completion: Do they achieve their goal?

- Retention: Do they come back?

- Payment: Will they pay? (If monetization is part of the MVP)

- Referral: Do they tell others?

Vanity metrics (signups, page views) feel good but mean little. Focus on metrics indicating genuine value delivery.
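As a rough sketch of the difference, the snippet below computes completion and retention from a small event log instead of counting signups. The event names (`signup`, `goal_completed`, `session`), the sample data, and the 7-day retention window are assumptions made for the example; your own tracking will use different names and windows.

```python
from datetime import date

# Hypothetical event log: (user_id, event_name, day). All values are made up.
EVENTS = [
    ("u1", "signup", date(2024, 1, 1)),
    ("u1", "goal_completed", date(2024, 1, 1)),
    ("u1", "session", date(2024, 1, 9)),
    ("u2", "signup", date(2024, 1, 2)),
    ("u2", "session", date(2024, 1, 2)),
    ("u3", "signup", date(2024, 1, 3)),
    ("u3", "goal_completed", date(2024, 1, 4)),
]


def completion_rate(events):
    """Share of signed-up users who completed the primary goal at least once."""
    signed_up = {u for u, name, _ in events if name == "signup"}
    completed = {u for u, name, _ in events if name == "goal_completed"}
    return len(completed & signed_up) / len(signed_up) if signed_up else 0.0


def retention_rate(events, min_days=7):
    """Share of signed-up users with any activity min_days or more after signup."""
    signup_day = {u: d for u, name, d in events if name == "signup"}
    returned = {
        u
        for u, name, d in events
        if u in signup_day
        and name != "signup"
        and (d - signup_day[u]).days >= min_days
    }
    return len(returned) / len(signup_day) if signup_day else 0.0


if __name__ == "__main__":
    print("signups:", len({u for u, name, _ in EVENTS if name == "signup"}))
    print("completion rate:", round(completion_rate(EVENTS), 2))
    print("retention rate:", round(retention_rate(EVENTS), 2))
```

In this made-up log, three signups look healthy on their own, yet only one user came back after a week. That gap is what the signup count hides.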

Talk to Users

Quantitative data shows what's happening. Qualitative feedback explains why.

Have actual conversations:

- What were you trying to accomplish?

- What was confusing?

- What's missing?

- Would you recommend this to others? Why/why not?

Five good user conversations often reveal more than weeks of studying analytics.

Be Ready to Pivot

MVPs sometimes validate assumptions. More often, they reveal that assumptions need adjustment.

Maybe the problem is real but your solution isn't right. Maybe you're solving the right problem for the wrong audience. Maybe a feature you thought was secondary is actually the main event.

This isn't failure; it's the point. Learning earlier costs less than learning after a full build.

Common MVP Mistakes

Building Too Much

"But we need just one more feature for it to make sense." This is the MVP death spiral. Resist it.

If your MVP needs 47 features to be viable, either your definition of viable is wrong, or your product is too complex for MVP validation.

Building Too Little

Equally problematic: shipping something so broken or incomplete that it can't actually test the hypothesis.

Users need to be able to accomplish their goal to give meaningful feedback. A checkout flow that crashes doesn't tell you whether people want to buy your product.

Ignoring Quality Where It Matters

Speed doesn't mean sloppiness. Users judge you on experience. If the MVP is so buggy or confusing that users can't form opinions about your value proposition, you learn nothing.

Focus quality investment on the critical path—the main user journey you're testing.

Not Actually Launching

Some MVPs languish in "almost ready" indefinitely. There's always one more thing to improve first.

Set a date. Ship whatever exists. Perfect is the enemy of done.

Not Listening to Feedback

Building an MVP and then ignoring what users say defeats the purpose. If you're going to rationalize away negative feedback and build what you wanted anyway, skip the MVP and accept the risk.

After the MVP: What Next?

MVP results lead to different paths:

Strong validation: Users love it, engagement is high, they're asking for more. Build the next iteration. Add carefully—still focusing on validated needs over assumptions.

Mixed signals: Some things work, others don't. Iterate on the MVP. Adjust what's not working. Retest.

Weak validation: Low engagement, users don't get it, they're not paying. Consider pivoting—same problem, different solution; or same solution, different problem. Or acknowledge the idea isn't working and move on.

Clear failure: Nobody wants this. The problem you're solving isn't painful enough, or your approach is fundamentally wrong. This is valuable information. Cheaper to learn in 8 weeks than 18 months.

Final Thoughts

MVPs aren't about building cheap or building bad. They're about learning fast.

The startup world is full of products that seemed like good ideas but weren't—discovered after years of development and millions of dollars. MVPs exist to compress that learning into weeks.

Build the minimum that tests your hypothesis. Ship it. Measure it. Learn from it. Then decide what to build next with actual data, not just hope.
